26

Sep 15

How to Unoptimize Your Content for Maximum Rankings

It’s often said that you should simply “write great content for your users” in order to do well from an SEO perspective. I think it should be more correctly stated as: “Write great content about a topic that your users care about and which few have done a good job addressing”. There are many aspects of this definition that will be interesting to explore, but what we’re going to address in this article is how to write content “about a topic”. I would argue that the most important step in content optimization is a final “unoptimization” step you should always perform before finalizing any document – to ensure you’ve successfully made the document be *about* the topic you’re targeting.

*Crazy Horse Monument photo credit – author: http://www.flickr.com/people/navin75/

Making a Document be “About” a Topic

What does it mean for a document to be “about a topic?” If you set out to write a document about [art garfunkel], how do you know whether what you wrote is really “about” that term?

Well, those of you who (rightly) believe in keyword density would probably argue “well, if I say [art garfunkel] enough, then presumably the document is about [art garfunkel]”. So, one definition of “about” could certainly be

The document mentions the topic, maybe even quite a bit.

Like Danny Noonan and Luke Skywalker, I’d like you to take your eye off the ball for a minute. (Let go with your feelings Luke! Be the ball Danny! Na-na-na-na-na-na-na-na-na!). Another, somewhat mind-blowing, negative definition of “about” could be, instead:

The document is not about anything else in the entire universe other than the topic, much.

Think of Michelangelo carving the statue of David out of a block. Some would say that he didn’t carve a statue; he simply carved away all of the parts of the block that *didn’t* resemble the statue. Understanding this concept is the key to optimizing your content – both to satisfy your users *and* to rank highly.

Let’s Walk Through This With a Thought Experiment

If you set out to write a document about [art garfunkel] and it mentions [paul simon], that’s probably appropriate and expected by your audience; to ignore Art Garfunkel’s early career would be to do a disservice to your readers.

But if your document mentions [paul simon] twice as often as it mentions [art garfunkel], I would put it to you that the document is very likely a *lot more* about [paul simon] than it is about [art garfunkel]. It might rank well for [paul simon], or [simon and garfunkel] but it won’t do as well as it *could* on [art garfunkel] if it were optimized to have that term be the most prominent one.

Think about it. If a writer targeting [art garfunkel] tries not to mention [paul simon] too much, that’s a pretty reasonable start at making the document be about [art garfunkel], isn’t it?

Of course, there are other signals that a document is about [art garfunkel], including anchor text, meta-tags, and so on. Probably peppering in some Art Garfunkel-related words like “poetry”, “actor”, and “tenor” would be a smart idea. Word stems are always great to pepper in, if possible, but I don’t know what the past participle of “Garfunkel” is, you’re on your own with that! 😉

Certainly though, you should try to remove occurrences of [paul simon] if you have too many of them. That’s what I mean by “unoptimizing”.
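To make the thought experiment concrete, here is a minimal sketch of counting how often each phrase shows up in a draft. The `count_phrase` helper and the sample `draft` text are made up for illustration; a real check would run against your own document.

```python
import re

def count_phrase(text: str, phrase: str) -> int:
    """Count case-insensitive, whole-word occurrences of a phrase."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))

# Hypothetical draft text, invented for this example only.
draft = (
    "Art Garfunkel met Paul Simon in school. Paul Simon wrote most of the "
    "songs, and Paul Simon later had a long solo career."
)

target_count = count_phrase(draft, "art garfunkel")
rival_count = count_phrase(draft, "paul simon")

if rival_count > target_count:
    print(f"[paul simon] appears {rival_count} times, "
          f"[art garfunkel] only {target_count}: time to unoptimize")
```

If the rival phrase wins, that is the cue to trim some of its mentions or reword them.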

Now Let’s Look at a Real World Example

Let’s look at a SERP for the search term [goofy]:

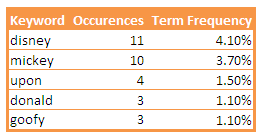

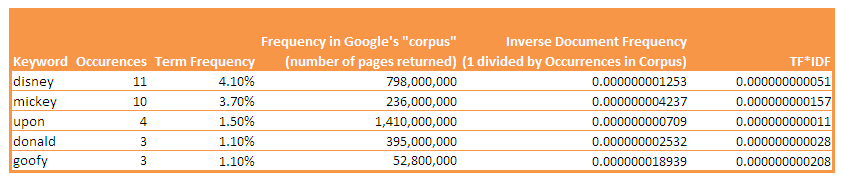

Although this document from go.com is ranking #7 for [goofy] which is pretty good (I’m not showing the other results), it could probably do somewhat better if it were a little more about [goofy]. A quick frequency examination, using textalyser.net, shows that this document doesn’t appear to be much about Goofy at all – [goofy] is mentioned 3 times, but [mickey] is mentioned 10 times (and Disney 11 times!):

But Does Google Use Keyword Frequency? No, But Probably Something Like It!

TF-IDF means “Term Frequency-Inverse Document Frequency”, and it’s a statistical approach to figuring out what a document is “about”.

It’s likely that Google is either using this approach, or something similar, to help figure out what documents are “about”. You won’t find “keyword density” in any Google patent applications, but the term “TF-IDF” appears in numerous key Google patent applications (sometimes it’s also referred to as TF*IDF or TFIDF). It’s such a common approach in the academic world and in the literature, it would be shocking if Google isn’t using it or something roughly equivalent, to some degree, for a variety of purposes, including ranking.

Given that, one could argue that if Google is using something along the lines of the TF-IDF type approach, then rather than simply measuring keyword density, we ought to try doing the same. Doing so would likely promote [goofy] and demote [mickey] a bit if we take that into account; let’s do the calculations and you’ll understand why.

How to Do a TF/IDF Calculation

It sounds complicated but calculating TF-IDF essentially amounts to:

a.) first you calculate the keyword density for each keyword in the document, and sort the keywords.

b.) then you adjust the sorting based on how commonly the word appears in a “corpus” (collection) of documents.

Very common words will get adjusted down in their frequency ranking, and very rare words will get adjusted upwards.
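The two steps above can be sketched roughly as follows. This uses the simplified weighting described in this article (density multiplied by one over the number of documents containing the word); many textbook versions use a logarithm of the corpus size divided by that count instead. The sample text and the `doc_counts` numbers are invented for illustration and are not real search-engine figures.

```python
from collections import Counter
import re

def keyword_density(text: str) -> dict[str, float]:
    """Step a: fraction of all words in the text that each word accounts for."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words)
    return {word: count / total for word, count in Counter(words).items()}

def simple_tf_idf(density: dict[str, float], doc_counts: dict[str, int]) -> dict[str, float]:
    """Step b: scale each density by 1 / (documents containing the word)."""
    return {word: tf / doc_counts[word] for word, tf in density.items() if word in doc_counts}

# Hypothetical text and hypothetical document counts (assumptions, not measured data).
text = "the dog and the iguana and the dog"
doc_counts = {"the": 1_000_000, "and": 900_000, "dog": 10_000, "iguana": 100}

density = keyword_density(text)
scores = simple_tf_idf(density, doc_counts)

by_density = sorted(density, key=density.get, reverse=True)
by_score = sorted(scores, key=scores.get, reverse=True)
print("by density:", by_density)
print("by adjusted score:", by_score)
```

In this toy case the rare word moves to the top of the adjusted list while the common words sink, which is the re-sorting described above.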

So, let’s assume the “corpus” Google has access to is about 1 trillion documents, give or take (i.e. the whole internet). A quick search for the word [the] tells us that [the] appears in 25,270,000,000 documents (i.e. about 25 billion).

[the] is a very common word; in fact, if you use the word [the] frequently, your document probably can’t really be said to even be “about” the term [the]. Let’s say your frequency of [the] is 5%, i.e. you’ve written 500 words and used [the] 25 times, for instance.

To adjust the frequency by using the inverse document frequency, we would take .05 and multiply it by (1/25,270,000,000), and get .000000000001979 . For a TF-IDF number, that is *very* small relative to other TF-IDF numbers (if we were to calculate them – we’ll show some others later), so we can tell a document with [the] in it for 5% of the words is not very much about the word [the].
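The arithmetic for [the] can be checked in a few lines. The variable names are just labels for the numbers already given above; the weighting is the simplified one-over-document-count version used in this article.

```python
the_count = 25                        # times [the] is used
total_words = 500                     # words in the document
docs_containing_the = 25_270_000_000  # documents reported for [the]

tf = the_count / total_words             # keyword density: 0.05, i.e. 5%
score = tf * (1 / docs_containing_the)   # density scaled by inverse document count

print(f"density = {tf:.2%}, adjusted score = {score:.3e}")
```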

If you adjust words for their IDF values (assuming you can either pick a good corpus, or just query Google a lot to see how many documents it has in each case), you can see that what people commonly call ‘stop words’ like [the] will get re-sorted down any frequency listing pretty quickly, while relatively rare words will move up somewhat.

Figure 2 shows the frequencies we found for various terms in the goofy/mickey document from go.com, and the top terms’ TF/IDF scores, based on how many documents Google reports they appear in:

Initially you may think looking at the table that the document is, relatively, more about [mickey] than [goofy] based on frequency alone, but when you look at the adjusted TF-IDF scores…sure enough, the document turns out to be more about “goofy” (a relatively rare term) than it is about “mickey”, even though “mickey” is mentioned more times!

Similarly, an article that mentions a horse (a common term) 11 times, and an iguana (very uncommon) 3 times, may be considered to be more about “iguana” than it is about “horse”.

So, you could argue that [goofy] is a much rarer term than [mickey] and it would probably get promoted somewhat by the “IDF” portion of the search engines’ algorithms. This may partially explain why this document is ranking well for the term [goofy] already.
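Here is a rough sketch of the horse-versus-iguana comparison, again using the simplified one-over-document-count weighting from this article. The article length and both document counts are hypothetical placeholders, chosen only to show the mechanics; plug in real counts to test a real pair of terms.

```python
def simple_score(mentions: int, total_words: int, docs_with_term: int) -> float:
    """Keyword density scaled by 1 / (documents containing the term)."""
    return (mentions / total_words) / docs_with_term

TOTAL_WORDS = 500               # hypothetical article length
DOCS_WITH_HORSE = 500_000_000   # hypothetical count for a common term
DOCS_WITH_IGUANA = 20_000_000   # hypothetical count for a rarer term

horse = simple_score(11, TOTAL_WORDS, DOCS_WITH_HORSE)
iguana = simple_score(3, TOTAL_WORDS, DOCS_WITH_IGUANA)

# With this weighting, the rarer term wins whenever the common term appears in
# more than 11/3 (about 3.7) times as many documents as the rare one.
print("more about:", "iguana" if iguana > horse else "horse")
```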

Should This Document Be Optimized Further?

You might even speculate that this document doesn’t need much more optimization, based on this. This is a tough call. Personally, I would *still* knock out a few “mickey”s and “disney”s, and throw in a few more [goofy]s, but that’s just how I roll – your mileage may vary. Of course, you might argue “but this page might bring in some traffic on the term [mickey] as well”. It may seem likely, but as it turns out, [mickey] is an extremely competitive term and I couldn’t find this page ranking anywhere for it. So I would probably tweak it a bit in [goofy]’s direction.

How much should it be tweaked? Well, that’s easy – just enough to rank better. If I make [goofy] more frequent than [mickey], that may actually look a little suspicious. But if I were to dig into what the top 2-3 ranking documents have for keyword frequencies for [goofy], then it is probably relatively safe for me to target whatever frequencies those top ranking documents are using. I’ll leave that as an exercise for the reader.

Should You Worry About TF/IDF?

This example may make you think “gee, should I worry about IDF all the time?”. It certainly seemed critical in this case.

The answer is a resounding *no*!!! From my experience, when you start analyzing 2, 3, and 4 word terms, the adjustments all end up being *virtually the same*.

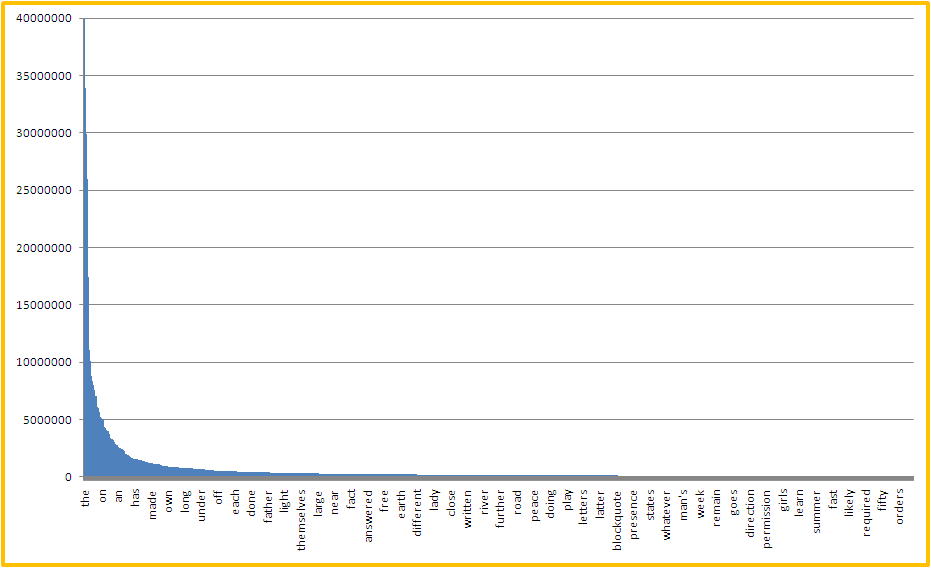

If you graph the frequency of occurrence of terms in documents, you can see very quickly that it is a very “long tail” curve, and the further out you go, the flatter it is. For example, I took the term frequencies for the most frequent 1,000 terms found in Project Gutenberg files (courtesy of Wiktionary), and graphed them in Figure 3. Note that Excel only lists the occasional label on the x-axis (it can only fit so many) but you will get the general idea:

When you go further out, to 15-20,000 terms and beyond, and start including 2, 3, and 4-term phrases, the curve gets extremely flat. So flat, that eventually you get to the point where, if one term appears 10 times and the other term appears 6 times, *that difference will dominate the calculations*, rather than the IDF numbers that you’re multiplying them by.

Eventually, looking at TF and IDF, the “TF” term (or in other words, the keyword frequency) *dominates* the result for terms that you’re likely to care about.

So for longer-tail terms (pretty much anything you’re likely to be optimizing for), you don’t need to worry about IDF at all. For all practical purposes it may as well be a constant. If it makes you really happy, select “1” as your constant – there you go, you’ve just adjusted Term Frequency and calculated TF/IDF by doing nothing to it.
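A small sketch of why the constant works: when the IDF weights of two long-tail phrases are nearly equal, sorting by the adjusted score gives the same order as sorting by plain frequency. The 10 and 6 counts are the ones from the example above; the phrase names, document length, and IDF weights are hypothetical.

```python
TOTAL_WORDS = 500  # hypothetical document length

# Counts from the 10-versus-6 example; the phrase names are placeholders.
term_counts = {"phrase a": 10, "phrase b": 6}

# Hypothetical IDF weights, nearly equal, as a flat long-tail curve would suggest.
idf = {"phrase a": 0.95, "phrase b": 1.05}

tf = {term: count / TOTAL_WORDS for term, count in term_counts.items()}
tf_idf = {term: tf[term] * idf[term] for term in tf}
constant_idf = {term: tf[term] * 1 for term in tf}  # IDF fixed at 1

rank_by_tf = sorted(tf, key=tf.get, reverse=True)
rank_by_tf_idf = sorted(tf_idf, key=tf_idf.get, reverse=True)

print(rank_by_tf == rank_by_tf_idf)  # same order either way for these numbers
```

The order would only flip if the IDF weights differed by more than the ratio of the counts, which is the single-word situation rather than the long-tail one.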

If you’re optimizing for a single word term it might be worth taking a look at IDF, but most of us in the SEO realm don’t really deal with single word terms in SEO efforts very often, so I wouldn’t worry about IDF…much.

Keyword Density Analysis Is Useful For Identifying Any *Unintended* Targeting

Have you ever attended an event where a speaker used the word “synergy” 15 times in the speech? The human brain seems to have a “word of the day” feature; I think we all do this to some degree – we’ll tend to use a word or phrase, unconsciously, a bunch of times without realizing it.

The problem is, this happens during your writing process as well. For example, I tend to find that I use the phrase “for example” a lot (probably in this very article). If I were to use it more often than whatever I’m targeting, then I run the risk that Google or Bing might think my document is about “example”, not “unoptimization”. If you’ve never done a keyword density analysis of your work, you should run a few – I think you would be surprised what phrases are accidentally prominent because you’re using them unconsciously.

Another problem can be, often you’re creating multiple pieces of content in a niche, where several of the terms may legitimately belong together, or can’t even really be written about without mentioning each other ([fishing rod] and [casting], for instance). If you’re making two content pieces, and you can’t write both documents without mentioning both phrases in each case, keyword density analysis can be a *great approach* to help you angle (so to speak) which document is “about” which concept.
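As a sketch of that two-document check, the snippet below counts both phrases in each piece and reports which one is more prominent. The helper names and both sample texts are invented for illustration.

```python
import re

def count_phrase(text: str, phrase: str) -> int:
    """Count case-insensitive, whole-word occurrences of a phrase."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))

def dominant_phrase(text: str, phrases: list[str]) -> str:
    """Return the phrase that occurs most often in the text."""
    counts = {phrase: count_phrase(text, phrase) for phrase in phrases}
    return max(counts, key=counts.get)

PHRASES = ["fishing rod", "casting"]

# Hypothetical content pieces, written only for this example.
doc_rods = (
    "Choosing a fishing rod depends on where you fish. A longer fishing rod "
    "helps with casting distance, while a short fishing rod is easier to carry."
)
doc_casting = (
    "Good casting starts with timing. Practice casting in an open field "
    "before casting from a boat with your fishing rod."
)

for name, doc in [("doc_rods", doc_rods), ("doc_casting", doc_casting)]:
    print(name, "is mostly about", dominant_phrase(doc, PHRASES))
```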

Unoptimization – The *Final* Step in Content Optimization

So, once you’ve determined your optimal document length, keyword density, word stems, and related words, and have drafted your document, there’s a final *VERY IMPORTANT* step you need to do.

I know of no one in the industry who has identified or written on this, but to me, it’s the final key to content optimization:

1.) Run a keyword density analysis (I use textalyser.net for this)

2.) See if any keywords rank *above* the term you’re targeting

3.) Unoptimize the document for those keywords.

You can “unoptimize” by removing occurrences of those keywords, replacing them with equivalent phrases, and *maybe* putting in your target keyword a few more times (be careful, you don’t want to go crazy with it).

Keep repeating this process until the target keyword is the most prominent keyword in the document.
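Steps 1 and 2 can be approximated with a short script like the one below. It is only a sketch: it is not how textalyser.net works internally, the stop-word list is a tiny assumed sample, and the `draft` text is made up.

```python
from collections import Counter
import re

# Assumed, deliberately tiny stop-word list; a real one would be much longer.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "it", "with", "for", "on"}

def keywords_above_target(text: str, target: str) -> list[tuple[str, int]]:
    """Return (word, count) pairs for words used more often than the target."""
    words = [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOP_WORDS]
    counts = Counter(words)
    target_count = counts[target]
    rivals = [(word, count) for word, count in counts.items() if count > target_count]
    # Most frequent first; ties broken alphabetically for a stable listing.
    return sorted(rivals, key=lambda item: (-item[1], item[0]))

# Hypothetical draft, invented for this example.
draft = (
    "Goofy joins Mickey at Disney. Mickey and Disney fans love Mickey, "
    "and Disney loves Goofy too."
)

rivals = keywords_above_target(draft, "goofy")
print(rivals)
```

An empty list means the target is already the most prominent keyword and the loop can stop.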

In Conclusion

Creating engaging, helpful content that users want is important, and a good first step is to actually make the document you’re writing be *about* whatever you’re trying to write about.

Whether there’s an “optimal” keyword density you should be targeting for a particular keyword you’re trying to rank for is a topic for another article down the road. The answer, by the way, is a resounding *yes*, regardless of statements by Google and others to the contrary. I know, I know….keyword density haters gonna hate, so go ahead and comment below if you need to get it out of your system…rest assured I’ll revisit that topic when I have more time.

At a minimum though, Keyword Density analysis is a *great* way to see, relatively speaking, what the document you’ve written actually appears to be about, and is an extremely important analysis to do before finalizing any SEO content – if only to make sure it’s not accidentally “about” something unintended.

![SERP result for search [goofy] (only position 7 shown)](https://coconutheadphones.com/wp-content/uploads/2012/03/goofy1.png)

this has got to be the most technical complete article on this I’ve seen. thanks.

In the past I’ve noticed rogue keywords showing up high in Google Webmaster Tools.

With some investigation I can find out why and remove the cause.

In several cases it has been text in image alt attributes, used repeatedly in the page templates.

I’m not sure how closely the keyword list in GWMT relates to the real algo, but SEO can be all about minimising risk and fixing the little things.

Thank you Ted; this is a very useful post. I’m starting an on-page optimization project for a client and am looking forward to experimenting with these techniques. Especially like the idea of doing a final keyword density analysis. Great stuff!

For years now I’ve been taking a very light approach on keyword usage on-site but this post showed me what I was overlooking – all those other phrases that can inadvertently take prominence over your target terms.

Awesome stuff!!!

Great discussion about how to write useful content without being sleazy about SEO, and at the same time being conscious that you are targeting the right terms. Thanks!

Interesting Ted.. I would like to add that on top of the TFIDF, Google may possibly be using a form of a reader-analysis test, much like the Flesch-Kincaid. I figure that not only must they look at the prevalence of a particular keyword, but the readability of a website to prevent over-optimization. Your thoughts?

I agree completely, although it may not even be that Google explicitly uses reading level (although they are exposing that to Webmasters now I think through Webmaster tools, right?). They may be sort of indirectly using it, by measuring bounce rate. There are some thoughts on this front from a couple of really smart guys here 😉

http://www.thesearchagents.com/2009/11/does-reading-level-matter-in-seo/

Just when I thought I had it, the goal post is moved….again… This is an interesting article and very in depth. As you mention in the conclusion, “Creating engaging, helpful content that users want” is important; this to me is one of the most important aspects, and at least for now, with all the changes from Google, it is where your hard work pays off.

Great article, every time I find my way back to your site I wonder why I read any other posts. You have a way of presenting SEO to non-SEO-savvy people, and you don’t use the site to sell affiliated products like so many other people do, so transparently. I’m going to try the text analyzer.

Interesting point of view. I bet many readers were surprised while reading the word “unoptimize”. 🙂 However it is a very common mistake to overoptimize the website.

There’s been a lot of talk in the SEO community about TF-IDF lately, so thought I’d return to this – it was my introduction to the topic some four years ago. Still a great article! These days it’s handy to get this information from URL Profiler during content audits.

Really good read, Ted. It’s all about topical and intent-based keyword analysis. If one term is used many times on a page and is not relevant to the term or intent we are targeting on that page, then we need to de-optimize the page for that term.

Although my brain is fried now, I learned a lot about KW density. I try to let content read as natural as possible but worry sometimes whether it’s too dense or not.